“We can’t refuse to look at artificial intelligence until it passes us by”: a new survey finds that most charities don’t understand how they could use artificial intelligence

The vast majority are open to learning more, with many saying they need more training and specialist staff.

Why It Matters

As artificial intelligence becomes more ubiquitous, the charitable sector risks being left behind if charities don’t feel they have adequate knowledge and resources to learn about AI-based tools and applications.

Photo by Alexander Sinn

This independent journalism on data, digital transformation and technology for social impact is made possible by the Future of Good editorial fellowship on digital transformation, supported by Mastercard Changeworks™.

At the end of last year, Furniture Bank – a charity that collects and redistributes lightly used furniture and homeware to those in need on Treaty 13 territory, colonially known as Toronto – raised some collective eyebrows. The charity had made a bold statement, using artificial intelligence to generate images for its holiday campaign. The images – which showed a woman and child in a barren home – had two concurrent goals: to encourage more donations, and to avoid putting families in need in distressing situations by asking them to be part of photoshoots.

“We represent a type of poverty that is invisible in the homelessness space. Millions of people get shelter, but getting the assets to form a home is hard,” says Dan Kershaw, executive director at Furniture Bank. “We’ve seen families using clothes as a bed and crates as tables. All charities want to visualize these challenges, but I can’t in good conscience ask those in destitution to have a photo crew come in.”

Since then, Kershaw and the Furniture Bank team have been tinkering with other ways to use artificial intelligence. For example, Kershaw says, there is scope for teaching an AI-driven chatbot all of the questions that the charity is frequently asked by clients and community members. In other words, a chat-based tool on the charity’s website would hold the most important information that somebody accessing services would need, available at any time of day and potentially in their native language. In comparison, the cost, time and complexity of sourcing multilingual volunteers can drain an organization’s resources.

Furniture Bank’s approach to artificial intelligence is a rare one in the social purpose sector – at the beginning of May, the charity even published a Responsible AI Manifesto, a living document that outlines how it plans to use artificial intelligence in service of its communities, without compromising its core values. “We can’t just refuse to look at artificial intelligence until it passes us by,” Kershaw adds.

“AI is the lowest hanging fruit: it’s free, there is no/low risk, and experimenting is a good way to try it out.”

While Furniture Bank is one of the first organizations in the charitable sector to fully embrace artificial intelligence with a manifesto, the vast majority of the sector feels underprepared to use these technologies in their organizations, according to a survey published by Charity Insights Canada, a project based out of Carleton University. Most also don’t feel that they understand the applicability of artificial intelligence to the sector.

Although this may seem alarming at first glance, this probably isn’t that different from the level of AI literacy and automation in other sectors, including the for-profit sector, says Charles Buchanan, founder and CEO of Technology Helps. “I don’t think [charities] are any less prepared than anybody else. But what they often don’t have is an IT department or function within the organization, where they could reach across the room and just ask what is going on. For-profit companies may not be doing anything with artificial intelligence, but there would be someone in their ranks, like a chief technology officer or a chief information officer, who could articulate the information on it.”

How are charities feeling about the rise of artificial intelligence?

A large share of the charities surveyed responded neutrally to two important statements posed to them in the survey: “AI will fundamentally change how the charitable sector operates” (38.8 per cent neutral) and “AI is not relevant to the work of our organization” (29.6 per cent neutral). Some 36.1 per cent also responded neutrally to the statement that AI could help charities target their programs and services more effectively. Most respondents also felt neutral about the risk of AI perpetuating bias and discrimination, leading to job displacement, and posing a risk to data privacy or security for charities and their clients.

On one hand, this suggests that many charities don’t know enough about artificial intelligence to feel comfortable voicing an opinion. On the other hand, the survey also shows that some do recognize its potential: for example, 77.8 per cent of those surveyed agreed on some level that AI could help them analyze large datasets, while 64.4 per cent agreed that it could help with content creation.

One of the strongest reactions of the charities surveyed was to the suggestion that AI could “reduce the need for human intervention and decision-making in the charitable sector” – 64.7 per cent of those surveyed disagreed. For Kershaw, it’s also vital that organizations bring the communities they serve into their processes of building AI-generated content. For example, he suggests “flagging at the beginning of any content whether or not it was generated with artificial intelligence, and give people the opportunity to provide feedback on how to make it better.”

Most charities are also concerned about the feasibility of implementing AI in their organizations. Some 76 per cent agreed that “AI could be too complex or difficult to use for smaller or less technologically advanced charitable organizations,” and 74.2 per cent agreed that “AI could be expensive for charitable organizations to implement.” Kershaw adds that this risk aversion isn’t a surprise for the charitable sector. “A lot of conversation is racing to embed AI into the sector, and we aren’t a sector that likes expensive or risky decisions.”

Megan Davidson, the interim lead at the Centre for Social Impact Technology, echoes this. “The social impact sector involves dealing with sensitive and confidential information about clients, including their personal history, health and social circumstances. There may be concerns about the ethical and privacy of such information when using advanced technology, including the risk of data breaches, cyber-attacks, or unauthorized access and the unknown negative impacts on the people they serve.” She adds that a lack of technical expertise and knowledge within the sector can make it a challenge for nonprofits to understand how AI can be used in the sector.

What kinds of support do charities need to better understand and adopt artificial intelligence?

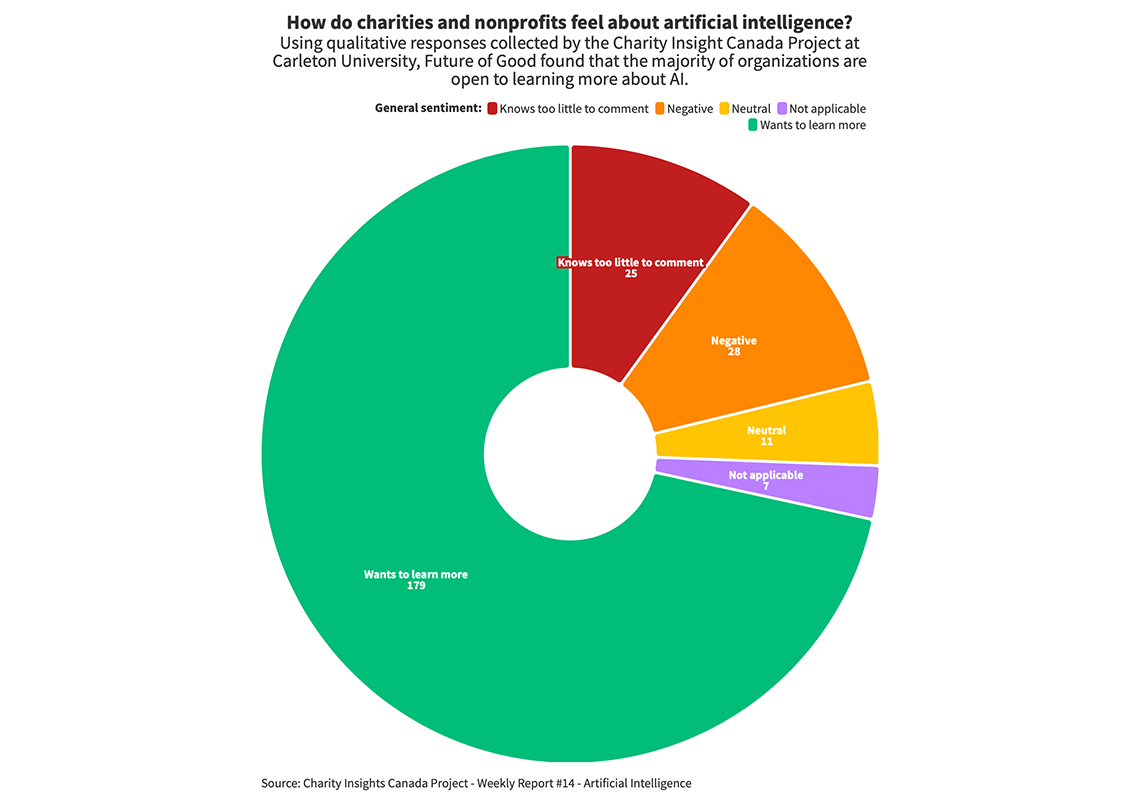

The final question on the survey asked respondents to state “what kinds of support [they] would need to increase [their] knowledge of AI technologies and their potential integration into the work of [their] organizations.” Future of Good carried out some data analysis of the qualitative responses, grouping them by sentiment and specific requests from the sector. While the research states there are 404 responses to the question, only 250 were published – of those, 71.6 per cent wanted to learn more, 11.2 per cent felt negatively about artificial intelligence and 10 per cent felt that they knew too little to comment. The remainder were either neutral or didn’t feel the question was applicable to them.

54 per cent of respondents said they needed more training or professional development in order to better understand and integrate artificial intelligence into their work. Respondents mentioned several types of training, including workshops, conferences, case studies, and opportunities to experiment. 14.8 per cent of respondents said that they wanted to learn more about artificial intelligence as a technology itself, while another 14.4 per cent of respondents wanted to understand the opportunities and challenges of applying artificial intelligence in the charitable sector.

“The most important thing for charities to have at this stage is demystification training,” says Buchanan. “Let’s just pause for a second, slow down, and stop screaming about it taking our jobs. What are we dealing with here? It’s predominantly a decision support technology. Once people get their heads around it, it can be simple and straightforward.” Kershaw adds that charities could start by learning specifically how to prompt AI.

A handful of survey respondents also suggested that dedicated IT staff, or exposure to technical experts and consultants, might be required to help them better understand and start to use artificial intelligence tools. But the “ability to retain such a person or skillset would be challenging,” Buchanan says.

“You don’t need AI experts. The technology is expanding at such a rate, and the people at the heart of [artificial intelligence] are in the business of making it accessible or usable.”

Davidson adds that organizations shouldn’t necessarily be looking to hire those with “hard skills” such as data analysis, machine learning and AI programming, but rather looking to facilitate an environment where employees can learn these skills on the job. “Ultimately, the success of AI initiatives will depend on organizations’ ability to balance technical expertise with creativity, collaboration and communication skills.”

Rather than hiring new, technical staff, Kershaw suggests building an internal environment for staff that facilitates and encourages experimentation with AI. “In the startup space, there is this idea of failing fast. Everyone is allowed to tinker and come up with ideas.” He suggests that hackathons could be a great way for people in the sector to band together and experiment: “The sector can’t play around if there isn’t a playground,” he adds. He suggests that charities start with the many new AI-based tools that are freely available to them. Examples include Perplexity, an intuitive search engine that provides a user with sources for the information it pulls, and Cohesive, which generates text for social media copy and scripts for video and blog content.

In 8.8 per cent of the responses, charities called for more funding, financial support and grants to help them better understand and implement artificial intelligence. Others cited access to affordable or cheaper technology, upgrades to their technology, and access to a better internet connection. While these are undeniably issues that the non-profit sector faces at large, they aren’t necessarily hindrances to accessing artificial intelligence anymore. “There are very few people at the non-profit level that will need robust computation or massive datasets,” Buchanan says. “Developers are making [artificial intelligence] accessible on your browser.” The fact that charities think they need much better technology to access AI suggests that they aren’t up to date with recent developments, which have made it accessible to ordinary people.

For Kershaw, funding isn’t necessarily the barrier that charities should be concerned about: it’s often not a problem to siphon off a portion of funding to programmers and technical tools, he says. Rather, leaders in charitable organizations who aren’t able or willing to appreciate the potential of artificial intelligence for their work can be more of a barrier. He compares the cost of ChatGPT Plus – $20 a month – to the cost of buying coffee every day.

In a charity, he says, “the CEO is the head of digital, and they can choose whether or not to make digital a priority. It can free up staff time and skillsets.” There are now many more opportunities for charitable organizations to also get involved in crafting the technology by creating and sharing training data that AI learns from. “AI models are actively asking to be taught,” Kershaw says. One example is OpenAI asking users to give feedback when ChatGPT’s responses don’t meet their expectations, then using that feedback to improve the machine learning algorithm behind the tool. “But the sector is taking a step back and throwing rocks.”

This awareness and education – on how data, and its availability and accuracy, shape AI – is actually more fundamental for charities to learn about, Buchanan says. “What charities should be concerned about is something they’re not. Algorithms can become skewed towards larger agencies with more published data […]. More data gets you on the map, but the data they produce can become a part of someone else’s decision making. There is a food chain and [charities] need to be mindful of it.”
