A race to regulate: Canada falls behind as the EU sets AI legislation

After Canada’s failed attempt to pass artificial intelligence legislation, other jurisdictions like the European Union have pushed forward with comprehensive rules. Experts say Ottawa could take lessons from their model.

Why It Matters

Having clear legislation outlining AI rules in Canada could promote responsible development of technology and prevent risks and online harm.

As the Canadian government tries for the second time to regulate artificial intelligence (AI), experts say it could take lessons from across the pond.

It’s been four years since the federal government introduced Bill C-27, its attempted overhaul of digital privacy and AI policy. The bill’s failure has left the country without a national AI approach.

Yet during that time, the European Union (EU) implemented its own AI legislation.

Canadian policymakers will likely look at places like the EU for possible ideas, according to Paul Samson, president of the Centre for International Governance Innovation.

“They would take elements, but they wouldn’t adopt something holus bolus,” Samson said. “It wouldn’t be an exact fit. But they’re absolutely looking at it.”

Industry leaders have criticized the EU’s policies as being overly restrictive.

“The Europeans do risk squeezing themselves out of the innovation side,” said Samson. “I don’t think the Europeans have got it totally right, but Canada could learn from them.”

Canadian legislation needs a balance between supporting industry innovation and protecting the public, he said.

“Competitiveness does not mean you have zero regulation.”

“If you have smart regulation, you could actually lead on the governance side, as well as encourage firm growth and innovation,” he said.

Lessons from the EU

In 2024, the EU became the first in the world to pass a comprehensive AI Act, which classifies AI systems by risk tier.

“It’s the first piece of ‘across the board’ AI regulation that’s on the books and Canada sort of tried to emulate the EU, but in a much hastier way, which resulted in Bill C-27 never actually getting adopted,” said Maroussia Levesque, who holds a PhD in law from Harvard University and researches AI governance.

She said the EU model offers lessons such as its use of adaptable legislation that evolves with technology.

“[They’re] building in iterative mechanisms within the law,” she said.

“What is posing risks today is not going to be the same type of systems that will be rolled out by the time laws come into effect,” said Levesque.

The EU uses independent expert bodies to advise policymakers, as well as technical standards that turn principles such as safety and fairness into practical guidance for developers, she added.

“They recognized that policymakers don’t really have the right set of firsthand knowledge. So they have an AI office which has experts, a scientific panel that has many different roles in advising the government that could also raise the alarm on some problematic deployment,” she said.

While these approaches are not without flaws, she said they provide a foundation that Canada could adapt.

“Canada, we’re not a big market like the EU. The EU has strength in numbers because if they deny a company access to their market, that’s a pretty big dent,” said Levesque.

However, critics say adopting all of the EU’s AI regulations in Canada could discourage companies from operating or investing here.

“The biggest element of economic growth will come from adoption,” said Michael Fekete, a technology partner with Osler.

“We have to be very careful not to create impediments to adoption where the cost of compliance can discourage adoption,” he said.

Over-regulation risks putting Canadian businesses at a global disadvantage, he added.

“We want to leverage AI to help generate economic well-being and to remain competitive on a global scale,” said Fekete.

He did agree that flexible legislation is needed. “We need to be in a position to respond to risks if and when they arise,” Fekete said.

“The (EU) AI Act highlights just how hard it is to regulate AI.”

Innovation, Science and Economic Development Canada said the government is looking at best practices from around the world.

“The Government also continues to collaborate internationally, including by working with G7 partners, to ensure that responsible approaches and strong safeguards for vulnerable groups are adopted broadly,” a department spokesperson said.

Canada’s AI evolution

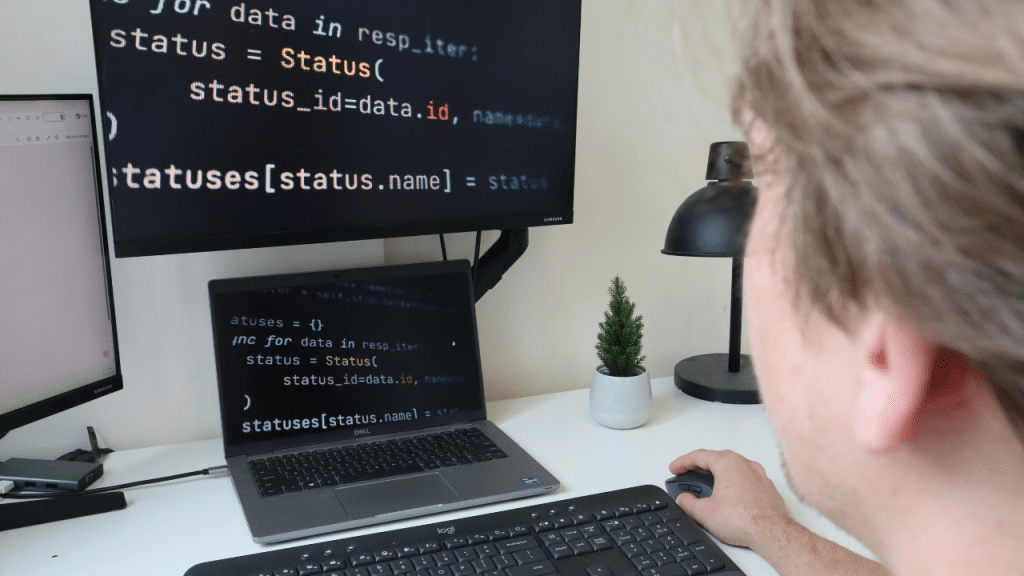

In 2017, the federal government launched the Pan-Canadian Artificial Intelligence Strategy (PCAIS), focused on research and talent.

Five years later, Ottawa proposed the Artificial Intelligence and Data Act (AIDA) as part of Bill C-27, aimed at regulating high-impact AI systems and improving accountability.

The bill died when Parliament was prorogued in January 2025 following Prime Minister Justin Trudeau’s resignation, although some experts say it was unlikely to pass regardless.

“It was more about being seen to be doing something rather than doing something effectively,” said Renee Black, CEO of GoodBot.

The proposed legislation had limited public consultation and didn’t reflect the entire sector, Black said.

“It was a very industry-centric piece of legislation that had very low trust among people in the non-profit sector,” she said.

“Bill C-27 was trying to do too much,” Samson added. “It was more than AI. It was taking on privacy as well. It wasn’t going anywhere because it just wasn’t the right package.”

The federal government has also introduced a voluntary code of conduct for generative AI, outlining safety and transparency expectations for companies.

In 2025, Prime Minister Mark Carney appointed Evan Solomon as Canada’s first AI minister and launched a national 30-day consultation to shape a new AI strategy, with more than 11,000 Canadians contributing.

The federal government says a range of existing laws, while not specifically designed for AI, already address some risks, including provisions in the Criminal Code, the Competition Act, financial regulations, and federal privacy laws governing how companies use Canadians’ data.

It’s working to update legislation to address deepfakes, strengthen election rules, modernize privacy legislation and introduce new protections against online harms.

Further delays could lead to privacy risks, data security breaches and misuse of emerging technologies, Samson said, and create uncertainty that could deter businesses from investing.

“It’d be a missed opportunity because like I said before, if you get the governance right, you could be a leader here,” said Samson.

The federal ministry would not share a timeline on when a new piece of legislation could be proposed.
